When people say “AI makes MCQs low quality”, what they usually mean is this: AI makes it easy to produce questions without doing the boring parts of assessment design. Those boring parts are exactly where validity and fairness get protected. The fix is not to avoid AI. The fix is to force a workflow where AI can generate drafts, but it cannot bypass blueprinting, item-writing rules, and review.

If you want your tutorials and MCQs to feel sharp (and not like recycled trivia), start by treating AI like a junior assistant. It can draft, but you set the spec and you do the final QA.

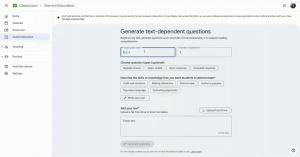

The first habit that separates good item banks from messy ones is a simple blueprint. Before generating anything, decide what you are actually sampling: which topics, which CLOs, and which cognitive level on Bloom's taxonomy (C1 remember, C2 understand, C3 apply). Without this, AI will “average out” into generic definitions and buzzwords. With a blueprint, you can tell the AI exactly what to produce and how many items per topic, then check coverage in seconds.

A practical blueprint can be as simple as this:

| Topic | CLO | Bloom level | Format | # items |
|---|---|---|---|---|
| Topic A | CLO 1 | C1–C2 | MCQ | 6 |
| Topic B | CLO 2 | C2–C3 | Scenario SAQ | 4 |
| Topic C | CLO 3 | C1–C2 | MCQ | 6 |
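To make the "check coverage in seconds" step concrete, here is a minimal sketch that compares drafted items against the blueprint. It assumes each drafted item has been tagged with a `topic` and a `clo` field; those field names, `BLUEPRINT`, `coverage_gaps` and `off_blueprint` are hypothetical names chosen for illustration, not part of any tool.

```python
from collections import Counter

# Hypothetical blueprint mirroring the table above: (topic, CLO) -> items required.
BLUEPRINT = {
    ("Topic A", "CLO 1"): 6,
    ("Topic B", "CLO 2"): 4,
    ("Topic C", "CLO 3"): 6,
}


def coverage_gaps(blueprint, items):
    """Return {(topic, clo): difference} for cells that are off target.

    A positive number means items are still missing; a negative number
    means more items were drafted than the blueprint asks for.
    """
    drafted = Counter((item["topic"], item["clo"]) for item in items)
    gaps = {}
    for cell, required in blueprint.items():
        diff = required - drafted.get(cell, 0)
        if diff != 0:
            gaps[cell] = diff
    return gaps


def off_blueprint(blueprint, items):
    """Return drafted items whose (topic, CLO) pair is not in the blueprint."""
    return [item for item in items if (item["topic"], item["clo"]) not in blueprint]


# Illustrative draft set: Topic B is one item short, Topic C has one extra.
drafted_items = (
    [{"topic": "Topic A", "clo": "CLO 1"}] * 6
    + [{"topic": "Topic B", "clo": "CLO 2"}] * 3
    + [{"topic": "Topic C", "clo": "CLO 3"}] * 7
)

print(coverage_gaps(BLUEPRINT, drafted_items))
```

Running this on the illustrative draft set reports one missing item for Topic B and one surplus item for Topic C, which is usually all you need to know before asking the AI for another batch.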

Once you have the blueprint, the next quality leap is counterintuitive: do not ask AI to write questions first. Ask it to write the stimulus first. In other words, generate short mini-scenarios, datasets, dialogues, or case vignettes, then generate questions from those. This does two things. First, it reduces the “textbook copy” vibe. Second, it naturally pushes items from pure recall towards application, because a scenario gives context that a good student can reason from.

Here’s the exact prompt style I use for that stage:

Generate 8 short Malaysian-context mini-scenarios (80–120 words each) aligned to these topics: [topics]. Each scenario must include 2–3 concrete details (numbers, constraints, market context) and should allow at least one application-level question (C2–C3). Avoid brand defamation and sensitive personal data.
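If you reuse this prompt across modules, it helps to fill it from the blueprint instead of retyping it. The sketch below is one way to do that, under stated assumptions: the `{placeholders}` and the function names `build_stimulus_prompt` and `within_word_limit` are my own illustrative additions, and the word-count check is only a rough guard on the 80–120 word limit, not a quality check.

```python
# Template copied from the prompt above; the {placeholders} are this sketch's additions.
STIMULUS_PROMPT = (
    "Generate {n} short Malaysian-context mini-scenarios "
    "({min_words}–{max_words} words each) aligned to these topics: {topics}. "
    "Each scenario must include 2–3 concrete details (numbers, constraints, "
    "market context) and should allow at least one application-level question "
    "(C2–C3). Avoid brand defamation and sensitive personal data."
)


def build_stimulus_prompt(topics, n=8, min_words=80, max_words=120):
    """Fill the stimulus prompt with topics taken from the blueprint."""
    if not topics:
        raise ValueError("at least one blueprint topic is required")
    return STIMULUS_PROMPT.format(
        n=n, min_words=min_words, max_words=max_words, topics="; ".join(topics)
    )


def within_word_limit(scenario, min_words=80, max_words=120):
    """Check that a returned scenario respects the requested word range."""
    return min_words <= len(scenario.split()) <= max_words


prompt = build_stimulus_prompt(["Topic A", "Topic B"])
print(prompt)
```

The word-limit check is worth running on whatever comes back, since scenarios that overshoot the limit tend to bury the details students need.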

Now, when you move from stimulus to MCQs, you need to lock down item-writing rules. This is where most AI-generated MCQs fail: vague stems, two correct answers, distractors that are obviously wrong, and giveaway patterns (one option much longer, or the only specific one). Standard item-writing guidance is consistent on a few points: keep options limited (often four), ensure one best answer, and avoid gimmicks like “All of the above” and “None of the above” because they distort what the item is measuring.

So your instruction to the AI should read like a specification, not a casual request. For example:

Using the scenario below, write 6 MCQs.

Rules:

- 4 options (A–D)
- one best answer only
- no “all/none of the above”
- avoid negative phrasing unless unavoidable
- options parallel in length and grammar
- distractors plausible and based on typical student misconceptions

After each MCQ, provide:

- Correct answer
- 2–3 sentence rationale
- 1 sentence why each distractor is wrong

Cognitive level: [C1/C2/C3]

Scenario: [paste]
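Some of these rules are mechanical enough to check automatically before a human reads the item. The following is a rough sketch, not a validated tool: it assumes each generated MCQ has been parsed into a dictionary with `stem`, `options`, `answer` and `distractor_notes` keys (a hypothetical structure), and the 1.5x length ratio and the list of negative words are arbitrary illustrative choices. It cannot judge plausibility or whether there is truly one best answer; that still needs a person.

```python
import re

BANNED_OPTION = re.compile(r"\b(all|none) of the above\b", re.IGNORECASE)
NEGATIVE_STEM = re.compile(r"\b(not|except|never)\b", re.IGNORECASE)


def lint_item(item, length_ratio=1.5):
    """Return a list of rule violations found in one parsed MCQ."""
    problems = []
    options = item["options"]  # expected shape: {"A": "...", "B": "...", ...}
    answer = item.get("answer")

    if sorted(options) != ["A", "B", "C", "D"]:
        problems.append("expected exactly four options labelled A-D")
    if answer not in options:
        problems.append("key does not match any option")
    for label, text in options.items():
        if BANNED_OPTION.search(text):
            problems.append(f"option {label} uses all/none of the above")
    if NEGATIVE_STEM.search(item["stem"]):
        problems.append("stem uses negative phrasing; confirm it is unavoidable")

    # Giveaway pattern: one option much longer than every other option.
    lengths = {label: len(text.split()) for label, text in options.items()}
    if len(lengths) > 1:
        longest = max(lengths, key=lengths.get)
        others = [n for label, n in lengths.items() if label != longest]
        if lengths[longest] > length_ratio * max(others):
            problems.append(f"option {longest} is much longer than the rest")

    # Every distractor should come with a reason why it is wrong.
    notes = item.get("distractor_notes", {})
    missing = [label for label in options if label != answer and label not in notes]
    if missing:
        problems.append("no 'why wrong' note for: " + ", ".join(missing))
    return problems


clean_item = {
    "stem": "A shop buys an item for RM80 and sells it for RM100. What is the markup on cost?",
    "options": {
        "A": "20 percent of cost",
        "B": "25 percent of cost",
        "C": "80 percent of cost",
        "D": "125 percent of cost",
    },
    "answer": "B",
    "distractor_notes": {
        "A": "Divides the profit by the selling price instead of the cost.",
        "C": "Divides the cost by the selling price.",
        "D": "Divides the selling price by the cost.",
    },
}

flawed_item = dict(
    clean_item,
    options={
        "A": "20 percent of cost",
        "B": "25 percent of cost",
        "C": "All of the above",
        "D": "125 percent of cost, because the selling price is always divided by the cost price",
    },
    distractor_notes={"A": "Divides the profit by the selling price.", "C": "Not a real option."},
)

for problem in lint_item(flawed_item):
    print(problem)
```

Treat the output as a triage list: an item that passes is not necessarily good, but an item that fails is almost always worth regenerating or rewriting before review.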

That “rationale + why distractors are wrong” requirement is your fastest quality filter. If AI cannot justify the key cleanly, the question is usually ambiguous. If a distractor is so implausible that its explanation is trivial, the question will not discriminate between students who understand the concept and students who do not. If two options can be defended, you will get student complaints and a moderation headache.

At this point, the most important “human” upgrade you can add is to design distractors from real student errors. The best distractors are not random. They are common misconceptions, common calculation slips, or a correct concept applied in the wrong place. If you have taught the module before, you already know a few. Feed them into the prompt. You will immediately get better distractors and fewer silly options.

After generation, do a quick fairness and clarity pass. You are checking for hidden assumptions, unclear pronouns (“this”, “they”), double-barrelled stems, cultural references that only some students will understand, and language complexity that is not part of the learning outcome. This is not being “soft”. It is good measurement practice: tests should support defensible interpretations and fair use.

Then pilot lightly. You do not need full psychometrics to catch obvious issues. Even a quick check with a colleague or a small group of students will reveal the usual failures: everyone gets it right instantly (cueing or too easy), everyone gets it wrong (unclear or misaligned with teaching), or students argue about the key (multiple defensible answers). If you later do item analysis, great, but even this informal pilot step saves you from deploying broken items.
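If you do get to item analysis later, the two most common statistics are easy to compute by hand or in a few lines. The sketch below assumes pilot responses are already scored as 1 (correct) or 0 (incorrect) per student per item; `item_stats` is a hypothetical helper, and comparing the top and bottom thirds is one illustrative choice of grouping. With a small pilot the numbers are noisy, and ties in total score make the group boundaries somewhat arbitrary, so read them as hints, not verdicts.

```python
def item_stats(responses, group_fraction=1 / 3):
    """Compute difficulty and upper-lower discrimination for each item.

    responses: one list per student, holding 1 (correct) or 0 (incorrect)
    for each item, in the same item order for every student.
    """
    n_students = len(responses)
    n_items = len(responses[0])

    # Rank students by total score, then take the top and bottom groups.
    ranked = sorted(responses, key=sum, reverse=True)
    k = max(1, round(n_students * group_fraction))
    upper, lower = ranked[:k], ranked[-k:]

    stats = []
    for i in range(n_items):
        # Difficulty: proportion of all students who answered correctly.
        difficulty = sum(r[i] for r in responses) / n_students
        # Discrimination: how much better the top group did than the bottom group.
        discrimination = (sum(r[i] for r in upper) - sum(r[i] for r in lower)) / k
        stats.append(
            {"item": i + 1, "difficulty": difficulty, "discrimination": discrimination}
        )
    return stats


# Illustrative pilot with 6 students and 3 items (made-up data).
pilot = [
    [1, 1, 1],
    [1, 1, 0],
    [1, 1, 0],
    [1, 0, 1],
    [1, 0, 0],
    [1, 0, 0],
]

for row in item_stats(pilot):
    print(row)
```

In this made-up pilot, item 1 has a difficulty of 1.0 and a discrimination of 0.0, which is the "everyone gets it right instantly" pattern described above, while item 2 separates the stronger and weaker students cleanly.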

One more point worth making explicit: tutorials and MCQs are not just assessment tools; they are learning tools. When used as low-stakes practice, frequent quizzing supports retention through retrieval practice, which has strong evidence behind it. That is why it’s worth building a high-quality question bank in the first place. You are not just “testing”, you are teaching.

If you want a simple rule to keep yourself honest, use this 20-second checklist per item. If any answer is “no”, revise the item:

- Does it clearly map to a specific topic and CLO?

- Is there one best answer, with no “depends” arguments?

- Are distractors plausible and same-category, not jokes or giveaways?

- Could a strong student answer without guessing what you meant?

- Does the rationale explain the concept, not just restate the option?
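If you keep your item bank in a spreadsheet or script, the checklist can double as a simple gate. This is a minimal sketch, assuming a reviewer records one yes/no answer per question in the order listed above; `CHECKLIST`, `failed_checks` and `needs_revision` are hypothetical names, and the judgement behind each answer is still entirely human.

```python
# Short labels for the five checklist questions above, in the same order.
CHECKLIST = (
    "Maps clearly to a specific topic and CLO",
    "One best answer, with no 'depends' arguments",
    "Distractors plausible and same-category",
    "A strong student can answer without guessing the intent",
    "Rationale explains the concept, not just the option",
)


def failed_checks(answers):
    """Return the checklist labels a reviewer answered 'no' to."""
    if len(answers) != len(CHECKLIST):
        raise ValueError(f"expected {len(CHECKLIST)} answers, got {len(answers)}")
    return [label for label, ok in zip(CHECKLIST, answers) if not ok]


def needs_revision(answers):
    """An item goes back for revision if any single check fails."""
    return bool(failed_checks(answers))


# Example: the reviewer was unhappy with the distractors only.
review = [True, True, False, True, True]
print(needs_revision(review), failed_checks(review))
```

Recording which check failed, not just that the item failed, makes it easier to spot patterns, such as a prompt that keeps producing weak distractors.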